The SLAM frontend, as I define it, detects features in each image and assigns the same landmark id to features that are matched across images.

Overall SLAM performance depends on the quality of the observation file produced by the frontend, as well as on the optimization problem set up by the backend.

Frontend

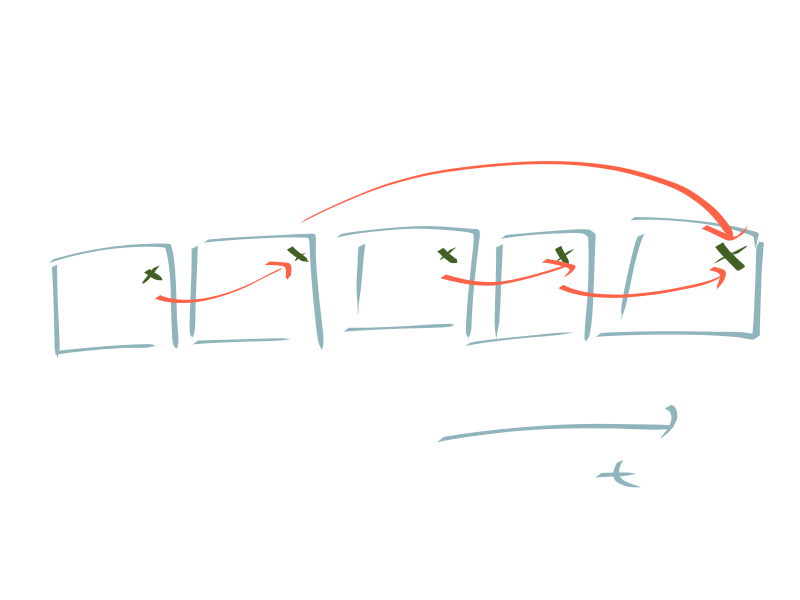

The main challenge in the frontend is assigning the same landmark id to all the matched features. The figure shows a realistic, non-ideal case. Even though these 3 features come from the same landmark, the matches might not be complete: some of the pairwise matches between them can be missing. I think a graph structure/algorithm is necessary to fully solve this problem.
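One possible graph-based approach, sketched here as an assumption rather than the method actually used, is to treat every feature as a node and every pairwise match as an edge, then give each connected component one landmark id. The sketch below does this with a union-find structure. The function name `assign_landmark_ids` and the `(image_index, keypoint_index)` feature keys are hypothetical.

```python
def assign_landmark_ids(features, matches):
    """Give every connected group of matched features one landmark id.

    features: iterable of hashable feature keys, e.g. (image_index, keypoint_index)
    matches:  iterable of (feature_a, feature_b) pairs, both present in `features`
    Returns a dict mapping each feature key to an integer landmark id.
    """
    # Each feature starts as the root of its own set.
    parent = {f: f for f in features}

    def find(f):
        # Walk up to the root, shortening the path along the way.
        while parent[f] != f:
            parent[f] = parent[parent[f]]
            f = parent[f]
        return f

    # Merge the two sets joined by every pairwise match.
    for a, b in matches:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Number the roots in order of first appearance.
    id_of_root = {}
    landmark_ids = {}
    for f in parent:
        root = find(f)
        if root not in id_of_root:
            id_of_root[root] = len(id_of_root)
        landmark_ids[f] = id_of_root[root]
    return landmark_ids


# Three features of one landmark with an incomplete match set:
# (0, 5)-(1, 7) and (1, 7)-(2, 3) are matched, (0, 5)-(2, 3) is missing.
features = [(0, 5), (1, 7), (2, 3), (2, 9)]
matches = [((0, 5), (1, 7)), ((1, 7), (2, 3))]
ids = assign_landmark_ids(features, matches)
```

In this example the three connected features share one id even though one pairwise match is missing, and the unmatched feature `(2, 9)` gets its own id. A wrong match can still merge two different landmarks into one component, so a real frontend would likely need an extra check, for instance rejecting components that contain two features from the same image.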

Backend

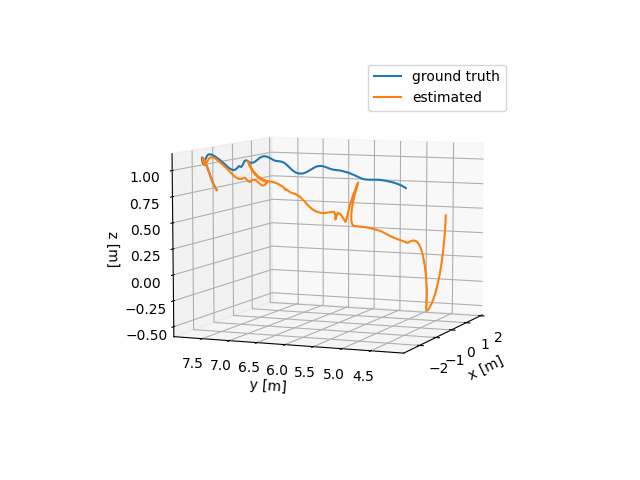

While the trajectory can be initially guessed from IMU dead reckoning, there is no direct way to initialize the 3D landmark positions. Instead, we normally initialize the landmark positions from the trajectory, which is essentially triangulation.
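As a rough illustration of initializing a landmark from known poses, here is a minimal sketch of linear (DLT) triangulation with NumPy. It assumes a pinhole camera and that each pose is already available as a 3x4 projection matrix `P = K [R | t]` mapping world points to pixels. The function name `triangulate_dlt` is hypothetical, and the backend described here may well use a different formulation.

```python
import numpy as np


def triangulate_dlt(projections, pixels):
    """Linear triangulation of one landmark from two or more views.

    projections: list of 3x4 projection matrices (world -> image)
    pixels:      list of (u, v) observations, one per projection
    Returns the 3D point in the world frame as a length-3 array.
    """
    rows = []
    for P, (u, v) in zip(projections, pixels):
        P = np.asarray(P, dtype=float)
        # Each observation contributes two linear constraints on the
        # homogeneous point X: (u * P[2] - P[0]) @ X = 0, same for v.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)

    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


# Two cameras with identity intrinsics, the second shifted 1 unit along x.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
landmark = triangulate_dlt([P1, P2], [(0.125, 0.05), (-0.125, 0.05)])
```

Because the poses come from dead reckoning, any drift in them carries over into the triangulated landmark, which is why this is only used as an initial guess.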

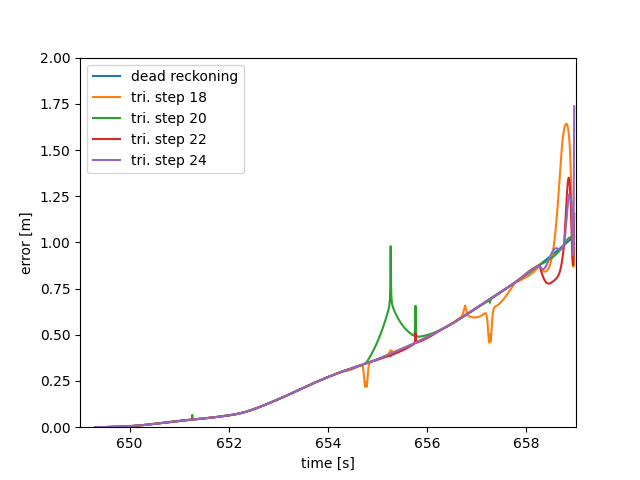

Triangulation is itself an optimization process, and we don't want it to fully converge. Otherwise, the result would be biased towards the dead reckoning result. The figure shows the effect of the number of triangulation steps on the overall performance.
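One way to picture "not fully converged" is to cap the number of refinement steps. The sketch below runs a fixed number of Gauss-Newton steps on the reprojection error of a single landmark while the poses stay fixed. The choice of Gauss-Newton, the finite-difference Jacobian, and the names `refine_landmark` and `max_iters` are all assumptions for illustration, since the optimizer actually used is not stated here.

```python
import numpy as np


def reprojection_residuals(X, projections, pixels):
    """Stack (predicted - observed) pixel errors over all views."""
    residuals = []
    for P, (u, v) in zip(projections, pixels):
        x = np.asarray(P, dtype=float) @ np.append(X, 1.0)
        residuals.extend([x[0] / x[2] - u, x[1] / x[2] - v])
    return np.array(residuals)


def refine_landmark(X0, projections, pixels, max_iters=3, eps=1e-6):
    """Run at most `max_iters` Gauss-Newton steps, poses held fixed."""
    X = np.asarray(X0, dtype=float).copy()
    for _ in range(max_iters):
        r = reprojection_residuals(X, projections, pixels)
        # Forward-difference Jacobian of the residuals w.r.t. X.
        J = np.zeros((r.size, 3))
        for k in range(3):
            dX = np.zeros(3)
            dX[k] = eps
            J[:, k] = (reprojection_residuals(X + dX, projections, pixels) - r) / eps
        # Gauss-Newton step: least-squares solution of J @ step = -r.
        step, *_ = np.linalg.lstsq(J, -r, rcond=None)
        X = X + step
    return X


# Same two-camera setup: identity intrinsics, 1 unit baseline along x.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
pixels = [(0.125, 0.05), (-0.125, 0.05)]
partly_refined = refine_landmark([0.6, 0.1, 5.0], [P1, P2], pixels, max_iters=1)
```

With a small `max_iters` the landmark moves toward the minimum of the reprojection error for the current poses without committing to it fully, which leaves room for the joint optimization to correct both the poses and the landmarks afterwards.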

The result still differs from an ideal SLAM result in a couple of ways. First, only a few trajectory points are displaced from the dead reckoning result. This might be because there are too many trajectory points. Second, the final part of the trajectory deviates considerably. This might be due to the lack of loop closure.

Next steps

- improving the assignment of landmark ids to features with a graph structure

- implementing the preintegration of IMU data